Detailed changes

@@ -114,7 +114,13 @@ The agent can search your codebase to find relevant context, but providing it ex

Add context by typing `@` in the message editor.

You can mention files, directories, symbols, previous threads, rules files, and diagnostics.

-Copying images and pasting them in the panel's message editor is also supported.

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+### Images

+

+You can add images to agent messages on providers that support vision models. OpenAI GPT-4o and later, Anthropic Claude 3 and later, Google Gemini 1.5 and 2.0, and Bedrock vision models (Claude 3+, Amazon Nova Pro and Lite, Meta Llama 3.2 Vision, Mistral Pixtral) all support image inputs.

+

+To add an image, use the `/file` slash command and select an image file, or drag an image from your file system directly into the agent panel message editor. You can also copy an image and paste it into the message editor.

When you paste multi-line code selections copied from a buffer, Zed automatically formats them as @-mentions with the file context.

To paste content without this automatic formatting, use {#kb agent::PasteRaw} to paste raw text directly.

@@ -168,7 +174,9 @@ You can explore the exact tools enabled in each profile by clicking on the profi

Alternatively, you can also use either the command palette, by running {#action agent::ManageProfiles}, or the keybinding directly, {#kb agent::ManageProfiles}, to have access to the profile management modal.

-Use {#kb agent::CycleModeSelector} to switch between profiles without opening the modal.

+> **Preview:** This keybinding is available in Zed Preview. It will be included in the next Stable release.

+

+Use {#kb agent::CycleModeSelector} to cycle through available profiles without opening the modal.

#### Custom Profiles {#custom-profiles}

@@ -290,20 +290,21 @@ See the [Tool Permissions](./tool-permissions.md) documentation for more example

> **Note:** Before Zed v0.224.0, tool approval was controlled by the `agent.always_allow_tool_actions` boolean (default `false`). Set it to `true` to auto-approve tool actions, or leave it `false` to require confirmation for edits and tool calls.

-### Single-file Review

+### Edit Display Mode

-Control whether to display review actions (accept & reject) in single buffers after the agent is done performing edits.

-The default value is `true`.

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+By default, agent edits open in multi-file review mode. To display agent edits in single-file editors instead, enable `single_file_review`:

```json [settings]

{

"agent": {

- "single_file_review": false

+ "single_file_review": true

}

}

```

-When set to `false`, these controls are only available in the multibuffer review tab.

+When enabled, each file modified by an agent opens in its own editor tab for review. When disabled (default), all changes appear in a unified review interface.

### Sound Notification

@@ -406,13 +406,49 @@ After adding your API key, Codestral will appear in the provider dropdown in the

### Self-Hosted OpenAI-compatible servers

-To configure Zed to use an arbitrary server for edit predictions:

+> **Preview:** This feature is available in Zed Preview. It will be included in the next Stable release.

-1. Open the Settings Editor (`Cmd+,` on macOS, `Ctrl+,` on Linux/Windows)

-2. Search for "Edit Predictions" and click **Configure Providers**

-3. Find the "OpenAI-compatible API" section and enter the URL and model name. You can also select a prompt format that Zed should use. Zed currently supports several FIM prompt formats, as well as Zed's own Zeta prompt format. If you do not select a prompt format, Zed will attempt to infer it from the model name.

+You can use any self-hosted server that implements the OpenAI Completions API. This includes vLLM, the llama.cpp server, LocalAI, and other compatible servers.

+

+#### Configuration

+

+Set `open_ai_compatible_api` as your provider and configure the API endpoint:

+

+```json [settings]

+{
+  "edit_predictions": {
+    "provider": "open_ai_compatible_api",
+    "open_ai_compatible_api": {
+      "api_url": "http://localhost:8080/v1/completions",
+      "model": "deepseek-coder-6.7b-base",
+      "prompt_format": "deepseek_coder",
+      "max_output_tokens": 64
+    }
+  }
+}

+```

+

+The `prompt_format` setting controls how code context is formatted for the model. Use `"infer"` to detect the format from the model name, or specify one explicitly:

+

+- `code_llama` - CodeLlama format: `<PRE> prefix <SUF> suffix <MID>`

+- `star_coder` - StarCoder format: `<fim_prefix>prefix<fim_suffix>suffix<fim_middle>`

+- `deepseek_coder` - DeepSeek format with special Unicode markers

+- `qwen` - Qwen/CodeGemma format: `<|fim_prefix|>prefix<|fim_suffix|>suffix<|fim_middle|>`

+- `codestral` - Codestral format: `[SUFFIX]suffix[PREFIX]prefix`

+- `glm` - GLM-4 format with code markers

+- `infer` - Auto-detect from model name (default)
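
As a rough illustration of how these formats arrange the code before and after the cursor, the sketch below fills in the four templates that are spelled out in the list above. `FIM_TEMPLATES` and `build_fim_prompt` are hypothetical names used only for this example, not part of Zed. `deepseek_coder` and `glm` are left out because their markers are not listed here, and the exact whitespace Zed emits is an assumption.

```python
# Hypothetical templates that mirror the format strings listed above.
# Exact whitespace around the markers is an assumption.
FIM_TEMPLATES = {
    "code_llama": "<PRE> {prefix} <SUF> {suffix} <MID>",
    "star_coder": "<fim_prefix>{prefix}<fim_suffix>{suffix}<fim_middle>",
    "qwen": "<|fim_prefix|>{prefix}<|fim_suffix|>{suffix}<|fim_middle|>",
    "codestral": "[SUFFIX]{suffix}[PREFIX]{prefix}",
}


def build_fim_prompt(prompt_format: str, prefix: str, suffix: str) -> str:
    """Wrap the text before and after the cursor in fill-in-the-middle markers."""
    try:
        template = FIM_TEMPLATES[prompt_format]
    except KeyError:
        raise ValueError(f"no template sketched for {prompt_format!r}") from None
    return template.format(prefix=prefix, suffix=suffix)


# The model is expected to generate the text that belongs between prefix and suffix.
prompt = build_fim_prompt("star_coder", "def add(a, b):\n    return ", "\n")
```

Note that `codestral` places the suffix before the prefix, so picking the wrong format for a model can silently degrade predictions.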

-The URL must accept requests according to OpenAI's [Completions API](https://developers.openai.com/api/reference/resources/completions/methods/create)

+Your server must implement the OpenAI `/v1/completions` endpoint. Zed sends edit prediction requests as POST requests in this format:

+

+```json

+{
+  "model": "your-model-name",
+  "prompt": "formatted-code-context",
+  "max_tokens": 256,
+  "temperature": 0.2,
+  "stop": ["<|endoftext|>", ...]
+}

+```
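
To check a self-hosted server against this shape, it can help to build an equivalent body by hand. The sketch below only serializes a request with the fields shown above; `build_completion_request` is a hypothetical helper, the default values are copied from the example, and the stop sequences Zed actually sends are not listed here, so the caller supplies them.

```python
import json


def build_completion_request(
    model: str,
    prompt: str,
    stop: list[str],
    max_tokens: int = 256,
    temperature: float = 0.2,
) -> bytes:
    """Serialize a completions-style request body with the fields shown above."""
    body = {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
        "temperature": temperature,
        "stop": stop,
    }
    return json.dumps(body).encode("utf-8")


# Example body; the stop list here is illustrative, not Zed's actual list.
payload = build_completion_request(
    model="your-model-name",
    prompt="formatted-code-context",
    stop=["<|endoftext|>"],
)
```

The resulting bytes can be sent as the body of a POST request with a `Content-Type: application/json` header to the configured `api_url`.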

## See also

@@ -151,7 +151,9 @@ For the most up-to-date supported regions and models, refer to the [Supported Mo

#### Extended Context Window {#bedrock-extended-context}

-Anthropic models on Bedrock support a [1M token extended context window](https://docs.anthropic.com/en/docs/build-with-claude/extended-context) beta. To enable this feature, add `"allow_extended_context": true` to your Bedrock configuration:

+> **Preview:** This feature is available in Zed Preview. It will be included in the next Stable release.

+

+Anthropic models on Bedrock support a 1M token extended context window through the `anthropic_beta` API parameter. To enable this feature, set `"allow_extended_context": true` in your Bedrock configuration:

```json [settings]

{

@@ -166,9 +168,13 @@ Anthropic models on Bedrock support a [1M token extended context window](https:/

}

```

-When enabled, Zed will include the `anthropic_beta` field in requests to Bedrock, enabling the 1M token context window for supported Anthropic models such as Claude Sonnet 4.5 and Claude Opus 4.6.

+Zed enables extended context for supported models (Claude Sonnet 4.5 and Claude Opus 4.6). Extended context usage may increase API costs—refer to AWS Bedrock pricing for details.

+

+#### Image Support {#bedrock-image-support}

+

+> **Preview:** This feature is available in Zed Preview. It will be included in the next Stable release.

-> **Note**: Extended context usage may incur additional API costs. Refer to your AWS Bedrock pricing for details.

+Bedrock models that support vision (Claude 3 and later, Amazon Nova Pro and Lite, Meta Llama 3.2 Vision models, Mistral Pixtral) can receive images in conversations and tool results. To send an image, use the slash command `/file` followed by an image path, or drag an image directly into the agent panel.

### Anthropic {#anthropic}

@@ -303,6 +309,15 @@ Here is an example of a custom Google AI model you could add to your Zed setting

"language_models": {

"google": {

"available_models": [

+ {

+ "name": "gemini-3.1-pro-preview",

+ "display_name": "Gemini 3.1 Pro",

+ "max_tokens": 1000000,

+ "mode": {

+ "type": "thinking",

+ "budget_tokens": 24000

+ }

+ },

{

"name": "gemini-3-flash-preview",

"display_name": "Gemini 3 Flash (Thinking)",

@@ -614,6 +629,25 @@ The OpenRouter API key will be saved in your keychain.

Zed will also use the `OPENROUTER_API_KEY` environment variable if it's defined.

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+When using OpenRouter as your assistant provider, you must explicitly select a model in your settings. OpenRouter no longer provides a default model selection.

+

+Configure your preferred OpenRouter model in `settings.json`:

+

+```json [settings]

+{
+  "agent": {
+    "default_model": {
+      "provider": "openrouter",
+      "model": "openrouter/auto"
+    }
+  }
+}

+```

+

+The `openrouter/auto` model automatically routes your requests to the most appropriate available model. You can also specify any model available through OpenRouter's API.

+

#### Custom Models {#openrouter-custom-models}

You can add custom models to the OpenRouter provider by adding the following to your Zed settings file ([how to edit](../configuring-zed.md#settings-files)):

@@ -86,6 +86,18 @@ Once installation is complete, you can return to the Agent Panel and start promp

How reliably MCP tools get called can vary from model to model.

Mentioning the MCP server by name can help the model pick tools from that server.

+#### Error Handling

+

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+When a context server encounters an error while processing a tool call, the agent receives the error message directly and the operation fails. Common error scenarios include:

+

+- Invalid parameters passed to the tool

+- Server-side failures (database connection issues, rate limits)

+- Unsupported operations or missing resources

+

+The error message from the context server will be shown in the agent's response, allowing you to diagnose and correct the issue. Check the context server's logs or documentation for details about specific error codes.

+

If you want to _ensure_ a given MCP server will be used, you can create [a custom profile](./agent-panel.md#custom-profiles) where all built-in tools (or the ones that could cause conflicts with the server's tools) are turned off and only the tools coming from the MCP server are turned on.

As an example, [the Dagger team suggests](https://container-use.com/agent-integrations#zed) doing that with their [Container Use MCP server](https://zed.dev/extensions/mcp-server-container-use):

@@ -43,6 +43,8 @@ Zed's plans offer hosted versions of major LLMs with higher rate limits than dir

| | OpenAI | Cached Input | $0.005 | $0.0055 |

| Gemini 3.1 Pro | Google | Input | $2.00 | $2.20 |

| | Google | Output | $12.00 | $13.20 |

+| Gemini 3.1 Pro | Google | Input | $2.00 | $2.20 |

+| | Google | Output | $12.00 | $13.20 |

| Gemini 3 Pro | Google | Input | $2.00 | $2.20 |

| | Google | Output | $12.00 | $13.20 |

| Gemini 3 Flash | Google | Input | $0.30 | $0.33 |

@@ -68,7 +70,7 @@ As of February 19, 2026, Zed Pro serves newer model versions in place of the ret

- Claude Sonnet 4 → Claude Sonnet 4.5 or Claude Sonnet 4.6

- Claude Sonnet 3.7 (retired Feb 19) → Claude Sonnet 4.5 or Claude Sonnet 4.6

- GPT-5.1 and GPT-5 → GPT-5.2 or GPT-5.2 Codex

-- Gemini 2.5 Pro → Gemini 3 Pro

+- Gemini 2.5 Pro → Gemini 3 Pro or Gemini 3.1 Pro

- Gemini 2.5 Flash → Gemini 3 Flash

## Usage {#usage}

@@ -19,3 +19,32 @@ The Collaboration Panel has two sections:

> **Warning:** Sharing a project gives collaborators access to your local file system within that project. Only collaborate with people you trust.

See the [Data and Privacy FAQs](https://zed.dev/faq#data-and-privacy) for more details.

+

+## Audio Settings {#audio-settings}

+

+### Selecting Audio Devices

+

+> **Preview:** This feature is available in Zed Preview. It will be included in the next Stable release.

+

+You can select specific input and output audio devices instead of using system defaults. To configure audio devices:

+

+1. Open {#kb zed::OpenSettings}

+2. Navigate to **Collaboration** > **Experimental**

+3. Use the **Output Audio Device** and **Input Audio Device** dropdowns to select your preferred devices

+

+Changes take effect immediately. If you select a device that becomes unavailable, Zed falls back to system defaults.

+

+To test your audio configuration, click **Test Audio** in the same section. This opens a window where you can verify your microphone and speaker work correctly with the selected devices.

+

+**JSON configuration:**

+

+```json [settings]

+{
+  "audio": {
+    "experimental.output_audio_device": "Device Name (device-id)",
+    "experimental.input_audio_device": "Device Name (device-id)"
+  }
+}

+```

+

+Set either value to `null` to use system defaults.

@@ -136,6 +136,10 @@ Not all languages in Zed support toolchain discovery and selection, but for thos

### Configuring Language Servers

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+When configuring language servers in your `settings.json`, autocomplete suggestions include all available LSP adapters recognized by Zed, not only those currently active for loaded languages. This helps you discover and configure language servers before opening files that use them.

+

Many language servers accept custom configuration options. You can set these in the `lsp` section of your `settings.json`:

```json [settings]

@@ -163,6 +163,16 @@ Some debug adapters (e.g. CodeLLDB and JavaScript) will also _verify_ whether yo

All breakpoints enabled for a given project are also listed in the "Breakpoints" item in your debugging session UI. From the "Breakpoints" item, you can also manage exception breakpoints.

The debug adapter will then stop whenever an exception of a given kind occurs. Which exception types are supported depends on the debug adapter.

+## Working with Split Panes

+

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+When debugging with multiple split panes open, Zed shows the active debug line in one pane and preserves your layout in others. If you have the same file open in multiple panes, the debugger picks a pane where the file is already the active tab—it won't switch tabs in panes where the file is inactive.

+

+Once the debugger picks a pane, it continues using that pane for subsequent breakpoints during the session. If you drag the tab with the active debug line to a different split, the debugger tracks the move and uses the new pane.

+

+This ensures the debugger doesn't disrupt your workflow when stepping through code across different files.

+

## Settings

The settings for the debugger are grouped under the `debugger` key in `settings.json`:

@@ -86,6 +86,36 @@ For benchmarking unit tests, annotate them with the `#[perf]` attribute from the

perf-test -p $CRATE` to benchmark them. See the rustdoc documentation on `crates/util_macros` and `tooling/perf` for

in-depth examples and explanations.

+## ETW Profiling on Windows

+

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+Zed supports Event Tracing for Windows (ETW) to capture detailed performance data. You can record CPU, GPU, disk I/O, and file I/O activity, with optional heap allocation tracking.

+

+### Recording a trace

+

+Open the command palette and run:

+

+- **`etw_tracing: Record Etw Trace`** — Records CPU, GPU, and I/O activity

+- **`etw_tracing: Record Etw Trace With Heap Tracing`** — Includes heap allocation data for the Zed process

+

+Zed prompts you to choose a save location for the `.etl` trace file.

+

+### Saving or canceling

+

+While recording:

+

+- **`etw_tracing: Save Etw Trace`** — Stops recording and saves the trace to disk

+- **`etw_tracing: Cancel Etw Trace`** — Stops recording without saving

+

+Zed buffers trace data in memory. Recordings automatically save after 60 seconds if you don't manually stop them.

+

+### Analyzing traces

+

+Open `.etl` files with [Windows Performance Analyzer](https://learn.microsoft.com/en-us/windows-hardware/test/wpt/windows-performance-analyzer) to inspect CPU stacks, GPU usage, disk I/O patterns, and heap allocations.

+

+**Note for existing keybindings**: The `etw_tracing::StopEtwTrace` action was renamed to `etw_tracing::SaveEtwTrace`. Update any custom keybindings that still reference the old name.

+

## Contributor links

- [CONTRIBUTING.md](https://github.com/zed-industries/zed/blob/main/CONTRIBUTING.md)

@@ -11,6 +11,14 @@ This guide covers the essential commands, environment setup, and navigation basi

## Quick Start

+### Welcome Page

+

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+When you open Zed without a folder, you see the welcome page in the main editor area. The welcome page offers quick actions to open a folder, clone a repository, or view documentation. Once you open a folder or file, the welcome page disappears. If you split the editor into multiple panes, the welcome page appears only in the center pane when empty—other panes show a standard empty state.

+

+To reopen the welcome page, close all items in the center pane or use the command palette to search for "Welcome".

+

### 1. Open a Project

Open a folder from the command line:

@@ -7,7 +7,9 @@ description: Navigate code structure with Zed's outline panel. View symbols, jum

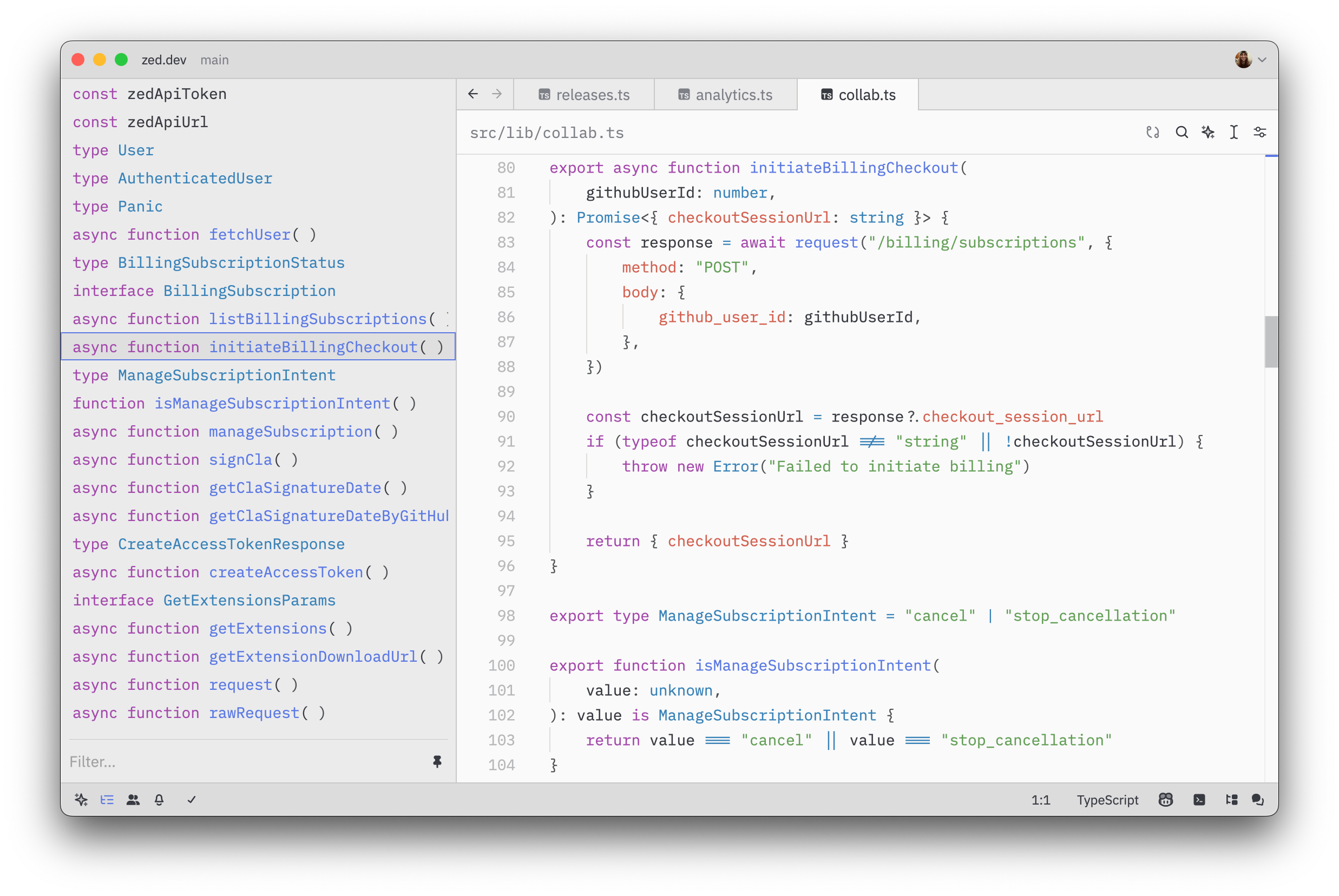

In addition to the modal outline (`cmd-shift-o`), Zed offers an outline panel. The outline panel can be deployed via `cmd-shift-b` (`outline panel: toggle focus` via the command palette), or by clicking the `Outline Panel` button in the status bar.

-When viewing a "singleton" buffer (i.e., a single file on a tab), the outline panel works similarly to that of the outline modal-it displays the outline of the current buffer's symbols, as reported by tree-sitter. Clicking on an entry allows you to jump to the associated section in the file. The outline view will also automatically scroll to the section associated with the current cursor position within the file.

+> **Changed in Preview (v0.225).** See [release notes](/releases#0.225).

+

+When viewing a "singleton" buffer (i.e., a single file in a tab), the outline panel works similarly to the outline modal: it displays the outline of the current buffer's symbols. Each symbol entry shows its type prefix (such as "struct", "fn", "mod", or "impl") along with the symbol name, so you can quickly tell what kind of symbol it is. Clicking an entry jumps to the associated section in the file. The outline view also automatically scrolls to the section associated with the current cursor position within the file.

@@ -223,6 +223,37 @@ This could be useful for launching a terminal application that you want to use i

}

```

+## VS Code Task Format

+

+> **Preview:** This feature is available in Zed Preview. It will be included in the next Stable release.

+

+When importing VS Code tasks from `.vscode/tasks.json`, you can omit the `label` field. Zed automatically generates labels based on the task type:

+

+- **npm tasks**: `npm: <script>` (e.g., `npm: start`)

+- **gulp tasks**: `gulp: <task>` (e.g., `gulp: build`)

+- **shell tasks**: Uses the `command` string directly (e.g., `echo hello`), or `shell` if the command is empty

+- **Tasks without type**: `Untitled Task`

+

+Example task file with auto-generated labels:

+

+```json

+{
+  "version": "2.0.0",
+  "tasks": [
+    {
+      "type": "npm",
+      "script": "start"
+    },
+    {
+      "type": "shell",
+      "command": "cargo build --release"
+    }
+  ]
+}

+```

+

+These tasks appear in the task picker as "npm: start" and "cargo build --release". You can override the generated label by providing an explicit `label` field.
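
The labeling rules above can be sketched as a small function. This is an illustration of the documented behavior, not Zed's implementation: `generate_label` is a hypothetical name, and treating any task type not listed above the same as a missing type is an assumption.

```python
def generate_label(task: dict) -> str:
    """Return the explicit label if present, otherwise derive one from the task type."""
    label = task.get("label")
    if label:
        return label  # an explicit label overrides the generated one
    task_type = task.get("type")
    if task_type == "npm":
        return f"npm: {task.get('script', '')}"
    if task_type == "gulp":
        return f"gulp: {task.get('task', '')}"
    if task_type == "shell":
        # Fall back to "shell" when the command is missing or empty.
        return task.get("command") or "shell"
    # Assumption: unlisted types fall back like tasks without a type.
    return "Untitled Task"


tasks = [
    {"type": "npm", "script": "start"},
    {"type": "shell", "command": "cargo build --release"},
]
labels = [generate_label(task) for task in tasks]
```

For the example task file, `labels` holds `"npm: start"` and `"cargo build --release"`, matching the names shown in the task picker.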

+

## Binding runnable tags to task templates

Zed supports overriding the default action for inline runnable indicators via workspace-local and global `tasks.json` file with the following precedence hierarchy:

@@ -83,3 +83,24 @@ If your issue persists after regenerating the database, please [file an issue](h

## Language Server Issues

If you're experiencing language-server related issues, such as stale diagnostics or issues jumping to definitions, restarting the language server via {#action editor::RestartLanguageServer} from the command palette will often resolve the issue.

+

+## Agent Error Messages

+

+### "Max tokens reached"

+

+> **Preview:** This error handling is available in Zed Preview. It will be included in the next Stable release.

+

+You see this error when the agent's response exceeds the model's maximum token limit. This happens when:

+

+- The agent generates an extremely long response

+- The conversation context plus the response exceeds the model's capacity

+- Tool outputs are large and consume the available token budget

+

+**To resolve this:**

+

+1. Start a new thread to reduce context size

+2. Use a model with a larger token limit in AI settings

+3. Break your request into smaller, focused tasks

+4. Clear tool outputs or previous messages using the thread controls

+

+The token limit varies by model—check your model provider's documentation for specific limits.